Today’s guest blogger is Simon Margolis, Senior Solutions Engineer at SADA. Simon works to design business solutions, focusing on the Google ecosystem.

As SADA helps its customers find new ways to leverage cloud technologies, we’ve had the opportunity to engineer “soup to nuts” solutions that take advantage of multiple parts of Google Cloud Platform. Google’s platform is unique in that many services (SaaS, PaaS, and IaaS) live within the same Google ecosystem, which allows for excellent interoperability and very fast data transfer between them. Below are a few examples of how SADA is using Cloud Platform to stay ahead of the curve and provide the best overall solutions to our customers.

Cloud File Server Migrator (CFSM)

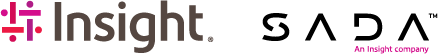

Some customers have data they’d like to migrate to Google Drive. Typically, we deploy tools within the data’s environment to push it into Google’s cloud. However, some customers have very large data sets or limited machine and network resources that prevent, or seriously hinder, pushing data into the cloud via these conventional methods. As a result, we developed the CFSM tool, which uses Google Cloud Storage (GCS) as well as Google Compute Engine (GCE). We move the data set to GCS via offline methods, which lets us work with that data from within GCE. Since both services are in the same Google ecosystem, data transfer from one to the other is extremely rapid.

Because GCE can quickly attach a single disk to multiple instances, we can then spin up multiple machines (the number dictated by data size) running our migration tools, which push multiple chunks of data into Google Drive in parallel. Once again, because Google Drive is in the same ecosystem as GCE and GCS, migration is remarkably fast.
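The post doesn’t describe how CFSM divides the data among instances. As a minimal sketch, assuming each instance receives a list of files and that file sizes are known up front, a greedy “largest file to the lightest worker” heuristic keeps per-instance workloads roughly balanced. The function name `partition_files` and the sample file names are hypothetical, and the actual upload to Google Drive is not shown.

```python
import heapq
from typing import Dict, List, Tuple


def partition_files(files: Dict[str, int], num_workers: int) -> List[List[str]]:
    """Split files into num_workers groups with roughly equal total size.

    Hypothetical helper: files maps a file name to its size in bytes.
    Largest files are placed first, each going to the currently lightest
    group (the classic longest-processing-time greedy heuristic).
    """
    if num_workers < 1:
        raise ValueError("num_workers must be at least 1")
    # Heap entries are (total_bytes, worker_index); the lightest worker pops first.
    heap: List[Tuple[int, int]] = [(0, i) for i in range(num_workers)]
    heapq.heapify(heap)
    groups: List[List[str]] = [[] for _ in range(num_workers)]
    for name, size in sorted(files.items(), key=lambda kv: kv[1], reverse=True):
        total, idx = heapq.heappop(heap)
        groups[idx].append(name)
        heapq.heappush(heap, (total + size, idx))
    return groups
```

For example, five files of 700, 500, 400, 300, and 100 bytes split across two workers come out as two groups of 1,000 bytes each. Greedy assignment isn’t optimal in every case, but it is fast and usually close enough when the goal is simply to keep every instance busy for about the same amount of time.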

SADA Data Migrator

SADA and Google offer a number of standard tools for migrating mail from legacy mail servers to Gmail; this is typically a core part of any Google Apps project. However, as adoption of Google Vault for email archiving picks up, demand for email archive migration has grown. Email archives stored by legacy archiving solutions are often kept in proprietary databases or non-standard file types.

This hurdle inspired the creation of the cloud-based SADA Data Migrator. Taking advantage of often unused egress bandwidth at customer sites, we use a tool that breaks customer data, whatever its format, into chunks, which are then uploaded to GCS in parallel. Once the data is securely in GCS, we can spin up any number of GCE instances and assign each one a chunk of data to process. Leveraging the power of distributed, on-demand GCE instances, we can tear apart proprietary or otherwise non-RFC 822-compliant data quickly and efficiently. We then use those same distributed instances to pump the data, converted to MIME format, into Google Vault. Once again, thanks to the proximity of GCS, GCE, and the Google Apps suite (SaaS), data transfer is extremely rapid.
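The conversion step above ends with standard MIME messages. Parsing each proprietary archive format is product-specific and isn’t described here, so this minimal sketch assumes a record has already been extracted into a few hypothetical fields (`ArchiveRecord`) and shows only how Python’s standard `email` package can render it as an RFC 822/MIME message. The `migrator.example` domain used for generated Message-IDs is a placeholder, and the upload into Google Vault is not shown.

```python
from datetime import datetime, timezone
from email.message import EmailMessage
from email.utils import format_datetime, make_msgid
from typing import List, TypedDict


class ArchiveRecord(TypedDict):
    """Hypothetical fields pulled out of one legacy archive entry."""
    sender: str
    recipients: List[str]
    subject: str
    sent_at: datetime
    body: str


def record_to_mime(record: ArchiveRecord) -> bytes:
    """Render an extracted archive record as a plain-text MIME message."""
    msg = EmailMessage()
    msg["From"] = record["sender"]
    msg["To"] = ", ".join(record["recipients"])
    msg["Subject"] = record["subject"]
    # RFC 822-style date, e.g. "Mon, 03 Mar 2014 09:15:00 +0000".
    msg["Date"] = format_datetime(record["sent_at"])
    # Placeholder domain; a real migration would keep the original
    # Message-ID whenever the archive preserved it.
    msg["Message-ID"] = make_msgid(domain="migrator.example")
    # Adds Content-Type and Content-Transfer-Encoding headers for the body.
    msg.set_content(record["body"])
    return msg.as_bytes()


if __name__ == "__main__":
    example = ArchiveRecord(
        sender="alice@example.com",
        recipients=["bob@example.com", "carol@example.com"],
        subject="Quarterly numbers",
        sent_at=datetime(2014, 3, 3, 9, 15, tzinfo=timezone.utc),
        body="Numbers attached.",
    )
    print(record_to_mime(example).decode("ascii"))
```

Because each record converts independently, this step parallelizes naturally: every GCE instance can run the same conversion over its own chunk.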

As a result of this process, SADA has developed an expansive tool set for provisioning, controlling, monitoring, reporting on, and retrieving data from GCE instances. This has allowed us to repurpose the SADA Data Migrator for other tasks that involve breaking up or otherwise processing data that must then be migrated into a cloud-based solution. We’re excited to apply this technology to future projects, pushing the limits of what can be done with cloud-based distributed computing.

SADA Cloud SSO (CSSO)

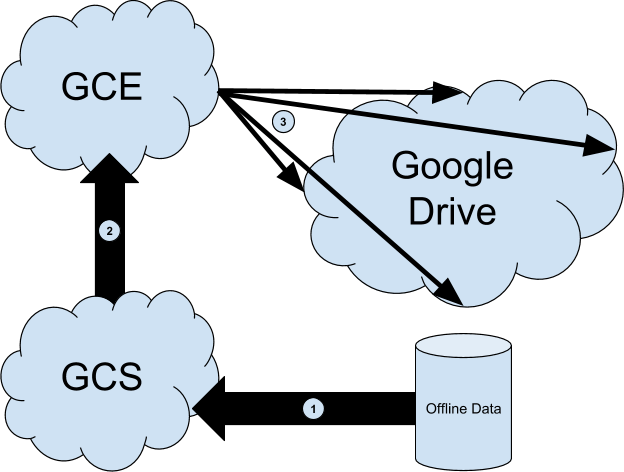

Google App Engine (GAE) is a fantastic PaaS solution that can be used to host web applications, among other things. Naturally, as GAE matured, SADA looked to move our Single Sign-On for Google Apps (SSO) to the cloud. The SSO page is often the single point of failure in a Google Apps user’s authentication process: should the page stop functioning or otherwise become unavailable, no authentication requests can be sent to the customer’s Identity Management solution (IDM), and therefore no authorization responses can be sent to Google Apps. By hosting this web application in GAE, the “behind the scenes” logistics of keeping a highly available (HA) web server up and running are taken out of the equation; the solution simply works.

One major obstacle in implementing this kind of security-conscious application in the cloud was communicating with customer IDMs. Specifically, our CSSO solution needs to communicate with customer LDAP servers via LDAPS. One of the beautiful features of a PaaS solution is that the developer need not worry about the underlying machines running an application. In this case, however, without knowing which machines are involved (often many virtual machines host the application simultaneously), we cannot tell customers how to grant us access to their LDAP servers. A firewall could, of course, be configured to allow anyone on the internet to connect, relying exclusively on application-layer authentication; however, we felt this was not the most secure option and therefore could not recommend it to our customers.

Instead, we decided to have App Engine talk to GCE, which allows instances to have dedicated IP addresses. We can then give this dedicated IP address to our customers, limiting the exposure in their firewalls. GAE sends requests to GCE, which in turn requests authentication information from the customer’s LDAP server. The reply is then sent from GCE back to GAE, giving the CSSO application all of the information it needs.
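To make the firewall argument concrete, here is a minimal sketch of the rule a customer firewall effectively enforces once the relay has a fixed address: only the GCE instance’s dedicated IP may reach the LDAPS port (636). The addresses come from documentation ranges and are placeholders, `is_allowed` is a hypothetical helper, and in practice this rule lives in the customer’s firewall configuration rather than in application code.

```python
import ipaddress
from typing import Iterable

LDAPS_PORT = 636  # standard port for LDAP over TLS


def is_allowed(source_ip: str, dest_port: int, allowlist: Iterable[str]) -> bool:
    """Return True if a connection may reach the customer's LDAPS port.

    allowlist holds single addresses or CIDR blocks, e.g. the dedicated IP
    of the GCE relay instance. Everything else is rejected, so the LDAP
    server is not exposed to the whole internet.
    """
    if dest_port != LDAPS_PORT:
        return False
    addr = ipaddress.ip_address(source_ip)
    # A bare address such as "203.0.113.10" becomes a /32 network.
    # Mixing IPv4 and IPv6 simply yields False for that entry.
    return any(addr in ipaddress.ip_network(entry, strict=False) for entry in allowlist)


if __name__ == "__main__":
    relay_only = ["203.0.113.10"]  # placeholder for the relay's dedicated IP
    print(is_allowed("203.0.113.10", 636, relay_only))  # the GCE relay
    print(is_allowed("198.51.100.7", 636, relay_only))  # any other host
```

With a PaaS front end alone, the allowlist would have to cover every address the platform might use; routing through one dedicated address shrinks it to a single entry.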

The real magic is in coordinating the GCE portion of this solution. We could simply spin up enough GCE instances to handle the maximum anticipated authentication load. However, for many customers, load peaks at specific times of day and on certain days (for example, 9 AM Monday through Friday), so running all of those instances constantly would be highly cost-ineffective. Running too few instances, on the other hand, would degrade performance, which is also unacceptable. On the GAE side, one of the benefits of a PaaS solution is that resources are allocated and deallocated as needed, without any developer intervention. This is not true of our dedicated GCE instances.

The solution was to create another App Engine application that controls our GCE instances. By running Google Analytics on our CSSO page, we can determine the load at any given time. The same data also lets us predict load for a given time, which is a real breakthrough in ensuring efficient resource allocation. Our controlling GAE application ingests the Analytics data and provisions and deprovisions GCE instances as needed. This means that we pay for exactly enough compute power at any given moment; no more and no less.
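The post doesn’t say how the controller turns Analytics data into an instance count. As one possible approach, and under explicit assumptions, the sketch below averages historical request counts per (weekday, hour) slot and sizes the pool using a fixed per-instance capacity plus a safety margin. The capacity, the 20% headroom, the min/max bounds, and the function names are all hypothetical; fetching data from Google Analytics and calling the GCE API to create or delete instances are not shown.

```python
import math
from collections import defaultdict
from datetime import datetime
from statistics import mean
from typing import Dict, Iterable, List, Tuple

Slot = Tuple[int, int]  # (weekday 0=Monday .. 6=Sunday, hour 0..23)


def hourly_profile(samples: Iterable[Tuple[datetime, int]]) -> Dict[Slot, float]:
    """Average historical request counts for each (weekday, hour) slot.

    samples: (timestamp, requests in that hour), e.g. exported from analytics.
    """
    buckets: Dict[Slot, List[int]] = defaultdict(list)
    for ts, requests in samples:
        buckets[(ts.weekday(), ts.hour)].append(requests)
    return {slot: mean(counts) for slot, counts in buckets.items()}


def instances_needed(predicted_requests: float, per_instance_capacity: int,
                     headroom: float = 0.2, min_instances: int = 1,
                     max_instances: int = 50) -> int:
    """Translate a predicted hourly load into a bounded instance count."""
    target = predicted_requests * (1 + headroom)  # margin for forecast error
    count = math.ceil(target / per_instance_capacity)
    return max(min_instances, min(max_instances, count))


def plan_for(when: datetime, profile: Dict[Slot, float],
             per_instance_capacity: int) -> int:
    """Instances to run at `when`; unseen slots fall back to the minimum."""
    predicted = profile.get((when.weekday(), when.hour), 0.0)
    return instances_needed(predicted, per_instance_capacity)


if __name__ == "__main__":
    history = [
        (datetime(2014, 3, 3, 9), 900),    # Monday 9 AM
        (datetime(2014, 3, 10, 9), 1100),  # the following Monday 9 AM
        (datetime(2014, 3, 3, 3), 20),     # Monday 3 AM
    ]
    profile = hourly_profile(history)
    print(plan_for(datetime(2014, 3, 17, 9), profile, per_instance_capacity=250))
    print(plan_for(datetime(2014, 3, 17, 3), profile, per_instance_capacity=250))
```

With this sample history, the Monday 9 AM slot averages 1,000 requests; at an assumed 250 requests per instance plus 20% headroom, the plan is 5 instances, while the quiet 3 AM slot falls back to the minimum of 1. The headroom deliberately trades a little cost for protection against forecast error, so this sketch aims for “just enough” rather than an exact match.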